-

Let’s not forget a Drupal 10 with DDEV

Of course all of this is availble from the DDEV documentation. To get a local version of Drupal 10 running you will of course need to run these commnds from a folder where you want your Drupal 10 project to be installed. I will also remind you that you must get DDEV installed with a…

-

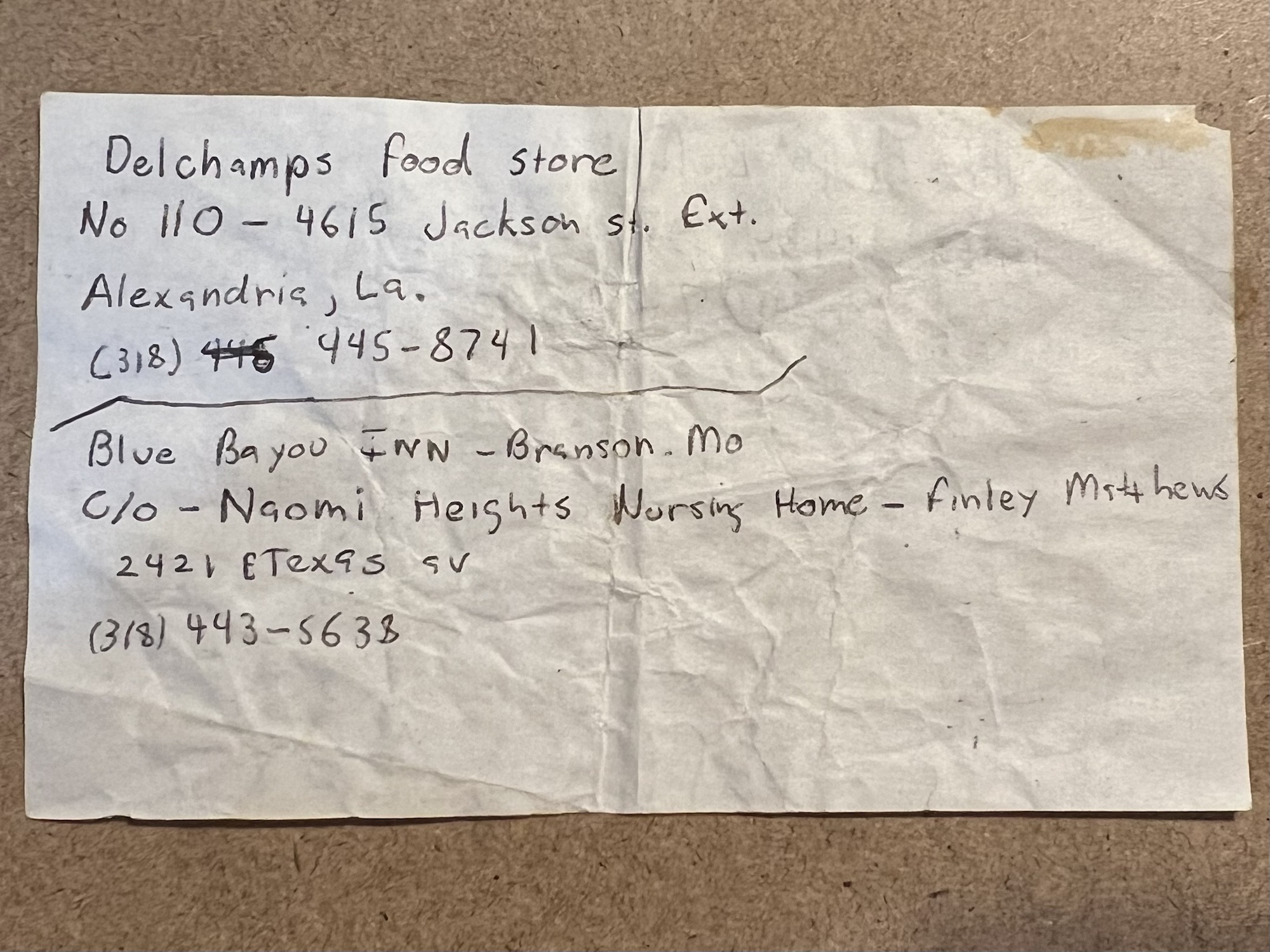

The intern resume 1994

It was so last century when your applications were hand typed at the library on a typewriter and copied for 10¢ Minus my freelance creative work as a minor of course. Those dnd books weren’t cheap.

-

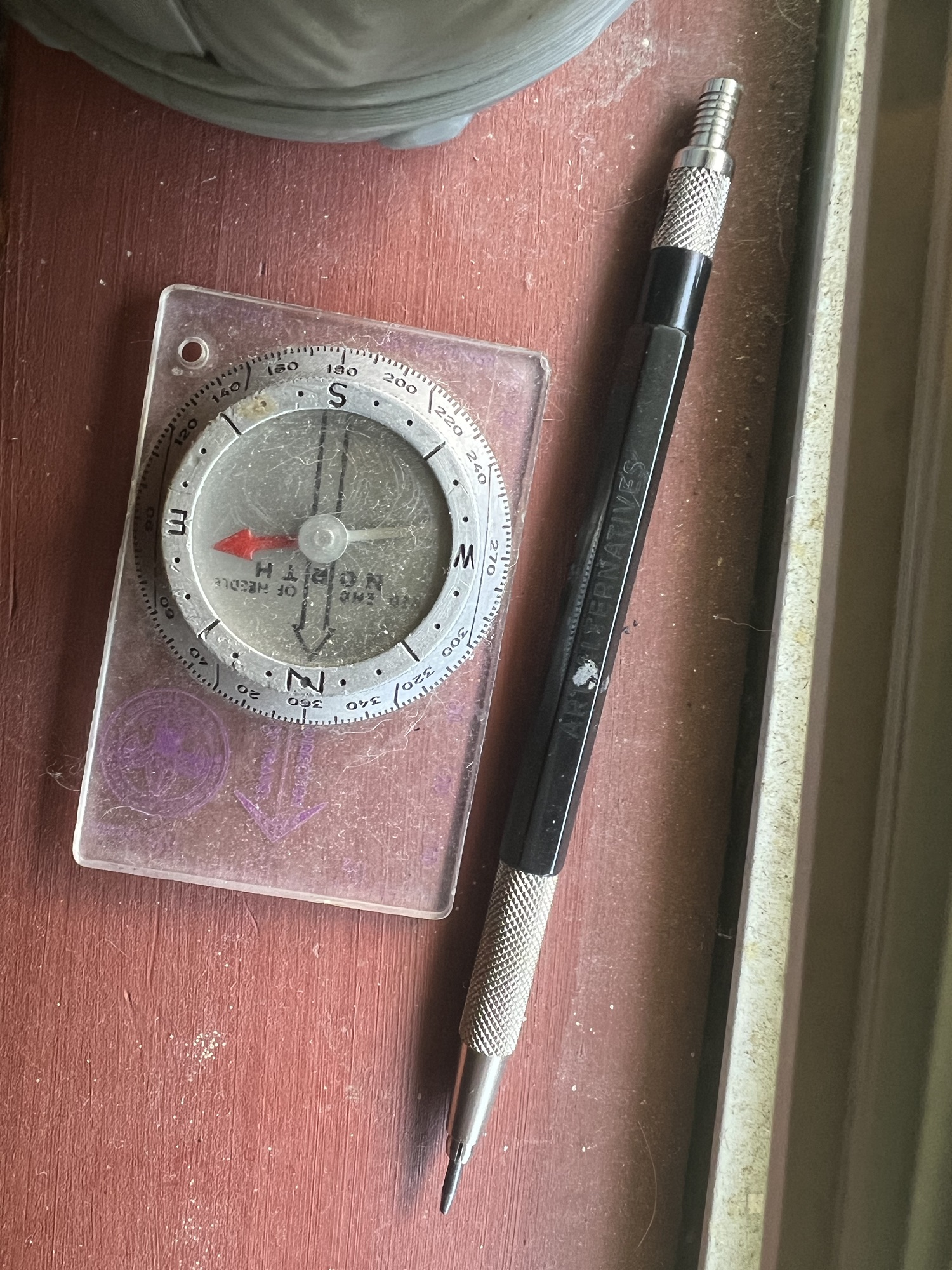

Directions

Points there.

-

End of Spring

-

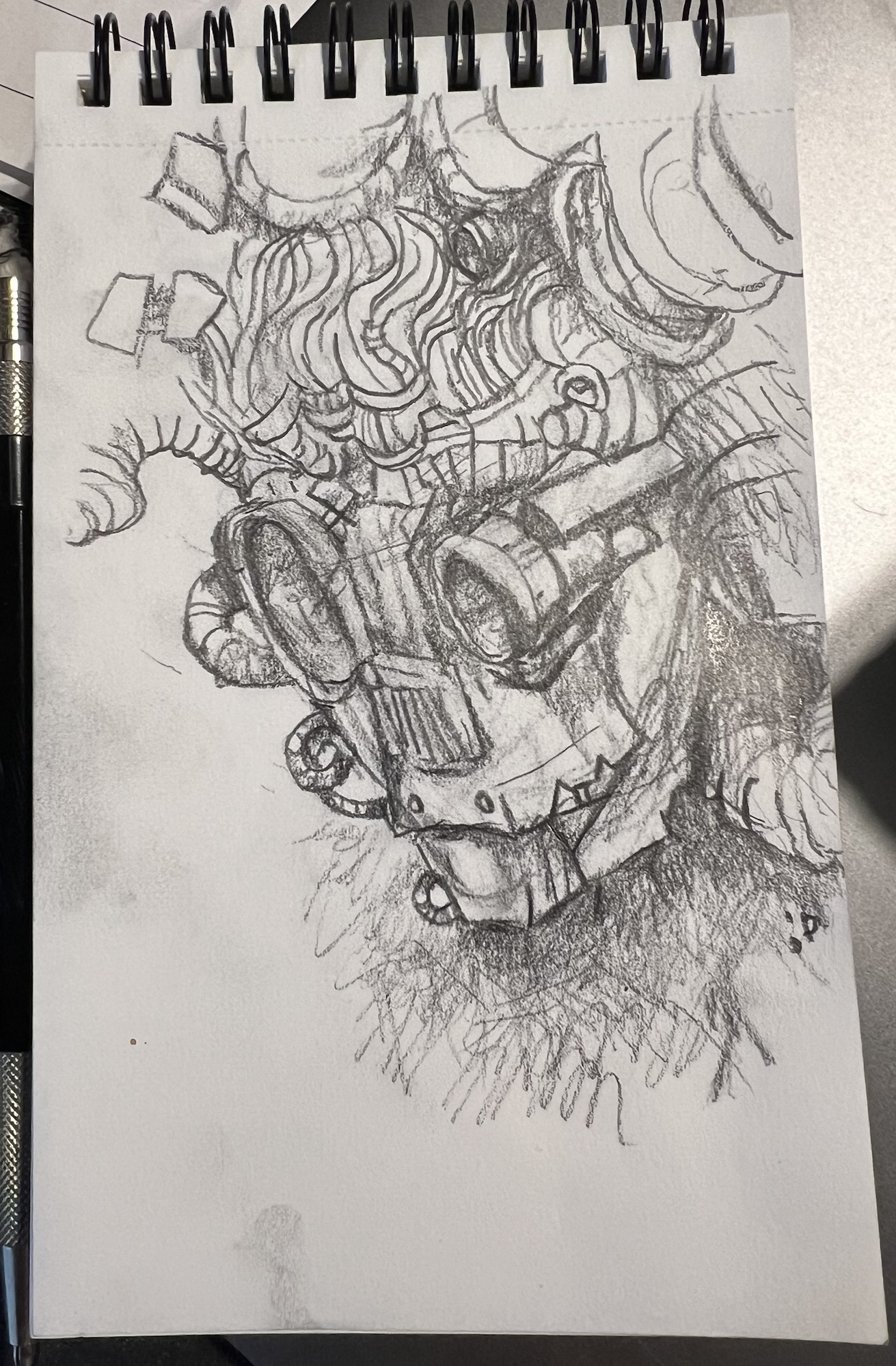

Talking down to gemini

The other reason to get my site working is to occasionally do this. Pencil, done while rebuilding this site before my birthday, 2024. Lowercase on the title intentional because humans know hierarchies.

-

My notes for bringing swamthings.org back by converting the messed up WordPress sub-directory multi-site to a single site with ddev and other stuff

Ok. Things are working as the title hints, but only sorta. I haven’t been online with any web thing for a long time in internet space-time. I’ve been doing other stuff, and getting some different perspectives, but that’s for future stories. These are the technical notes that I am adding to my collection as I…

-

In south Louisiana sometimes things just grow

-

Something simple to start from

I’ve written something like this before as I do some web testing thing. A quick little shift to a Gutenberg starter theme child for a bit.

-

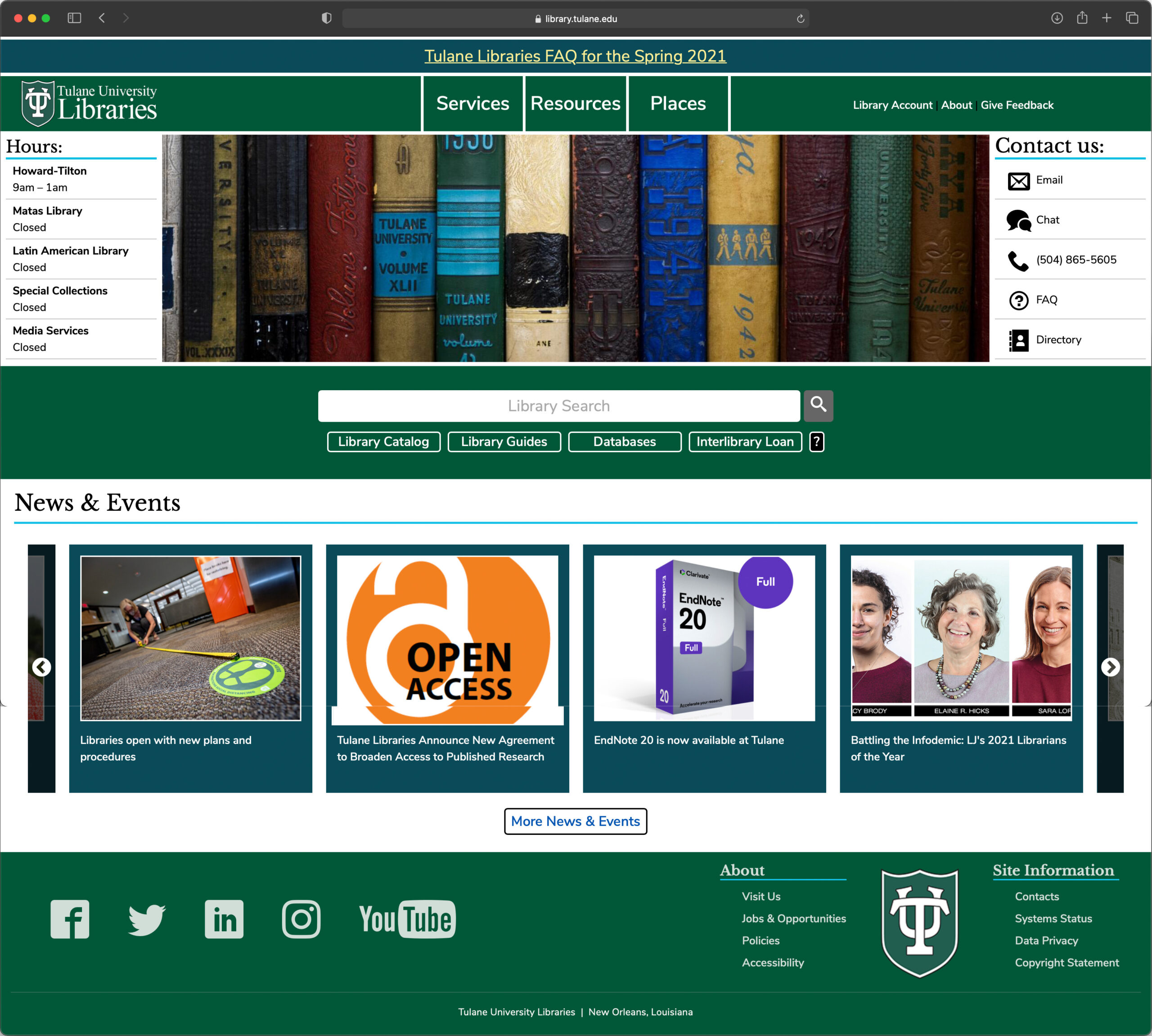

2021 Tulane University Library Website

The Tulane University Libraries website uses the Drupal CMS system. I work with other members of the Library IT staff to produce and maintain the website. This site was produced in 2021. The site was under additional iteration changes when this post was started.